The Missing Guide to Extracting Video Frames on Android

Nicolas Fez

October 2, 2025

Introduction

In a recent client project, I needed to read various types of video sources and extract frames for further processing. These sources could range from a simple local video file to real-time network streams such as RTSP or RTP over UDP.

As developers, one of our first steps is always to evaluate the platform on which the solution will run and to ask ourselves a fundamental question:

Has anyone already solved this problem?

Fortunately, in this case, the answer is yes! 🙂

What This Article Covers

This guide begins with a short reminder of how video streams work—covering the essentials you need to know before diving into code. If you are already comfortable with the basics of video architecture, feel free to skip ahead directly to the practical section.

Looking Ahead

After the brief theoretical overview, I will first show how to extract video frames with JavaCV, a third-party library. I will then show how to achieve the same result with Media3 ExoPlayer, the current Android standard. This two-part approach gives you both a practical introduction to an external tool and a deeper look at the official framework.

Understanding Video Streams

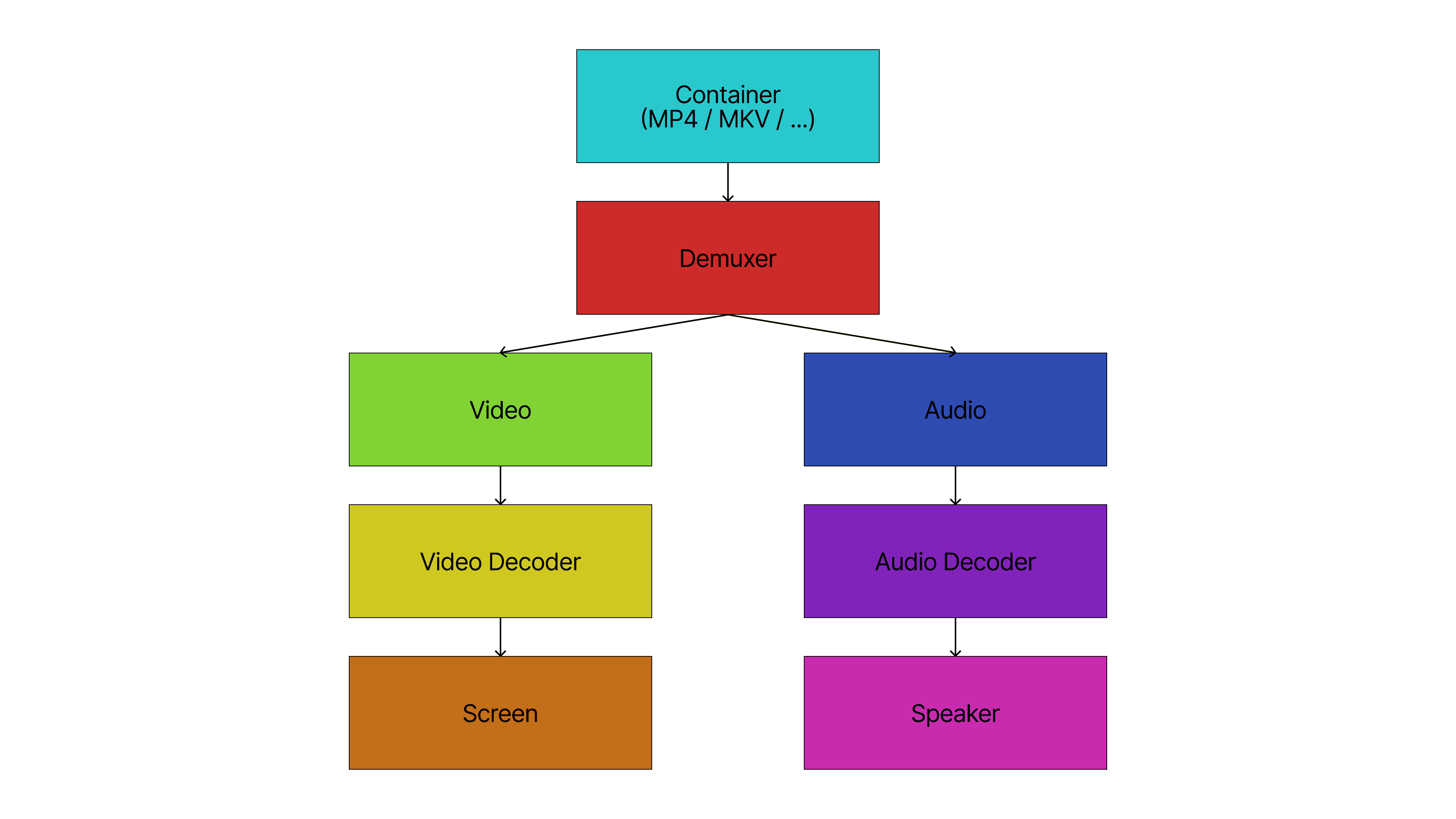

A digital video is essentially the combination of moving images and synchronized sound. To render it correctly on a device, these components must first be separated and then decoded before playback.

For example, an MP4 file contains both video and audio tracks. A demuxer is responsible for splitting these tracks. Once separated, the streams remain encoded and require decoding with the appropriate codecs. Popular audio codecs include AAC, MP3 and Opus, while common video codecs are H.264, HEVC, VP9 and AV1.

When performing the reverse operation—creating a video file—the process involves encoding the raw data and then muxing the audio and video back together into a single container format, producing a file or stream that can be shared and played.
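The demuxing step described above can be pictured with a toy model. This is only an illustrative sketch, not how FFmpeg or Android implement it: the `ToyDemuxer` and `Packet` names are hypothetical, and the payloads are plain strings standing in for still-encoded data.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Toy model of demuxing: routes interleaved packets to one list per track. */
final class ToyDemuxer {

    /** A still-encoded chunk of data, tagged with the track it belongs to. */
    static final class Packet {
        final int trackIndex;
        final long timestampUs;
        final String payload;

        Packet(int trackIndex, long timestampUs, String payload) {
            this.trackIndex = trackIndex;
            this.timestampUs = timestampUs;
            this.payload = payload;
        }
    }

    /** Splits the interleaved packets of a container into per-track lists. */
    static Map<Integer, List<Packet>> demux(List<Packet> interleaved) {
        Map<Integer, List<Packet>> tracks = new TreeMap<>();
        for (Packet packet : interleaved) {
            tracks.computeIfAbsent(packet.trackIndex, k -> new ArrayList<>()).add(packet);
        }
        return tracks;
    }

    public static void main(String[] args) {
        // Track 0 plays the role of the video track, track 1 the audio track.
        List<Packet> container = Arrays.asList(
                new Packet(0, 0L, "video-0"),
                new Packet(1, 0L, "audio-0"),
                new Packet(0, 40_000L, "video-1"),
                new Packet(1, 21_333L, "audio-1"));

        demux(container).forEach((track, packets) ->
                System.out.println("track " + track + ": " + packets.size() + " packets"));
    }
}
```

Each per-track list would then be handed to the decoder for its codec. Muxing is the same routing in reverse: packets from several tracks are interleaved by timestamp into one container.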

Existing Tools

Thanks to decades of work by the multimedia community, powerful tools have been developed to handle these operations. Among the most widely used are FFmpeg and GStreamer.

Since this article later demonstrates JavaCV, it is worth noting that JavaCV relies on FFmpeg internally to capture video frames, similar to how the OpenCV library works. On Android, however, OpenCV is only partially functional: it relies mainly on the system media library and does not provide full support for reading network streams.

Transport Over Networks

RTSP (Real Time Streaming Protocol) and RTP (Real-time Transport Protocol) are network protocols used to transmit video and audio data. RTSP sets up and controls the streaming session, while RTP, usually carried over UDP, transports the media packets themselves. Together they enable remote access to distant cameras and make it possible to retrieve real-time information, including synchronized sound and image.

Video on Android

For our example, we will focus mainly on the video part, that is, the sequence of images. We will turn a video stream into individual frames, and our primary interest will be retrieving them as Bitmap objects. A Bitmap represents an image in memory that can be processed further or displayed in the UI. While it is also possible to render frames directly onto a Surface, an Android component that provides a drawing target for video output, our emphasis here will remain on producing Bitmaps.

The Codebase

Before diving deeper into video stream analysis, we will first look at the permissions our application needs, and then I will show you how our main activity will be structured. The code for this article is publicly available on GitHub here.

Permissions

To load video files, we need the following permissions for storage access:

```xml
<uses-permission
    android:name="android.permission.READ_EXTERNAL_STORAGE"
    android:maxSdkVersion="32" />
<uses-permission android:name="android.permission.READ_MEDIA_VIDEO" />
```

VideoStreamReader Class

We will then create our VideoStreamReader class, which will have two main methods: start() and stop().

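```kotlin
import android.graphics.Bitmap
import android.net.Uri

abstract class VideoStreamReader {
    // Observers notified each time a new frame is available.
    val observerList = mutableListOf<VideoObserver>()

    // Starts reading frames from the given file or stream.
    abstract fun start(uri: Uri)

    // Stops reading frames.
    abstract fun stop()

    // Delivers a decoded frame to every registered observer.
    fun notifyObservers(bitmap: Bitmap) {
        observerList.forEach { it.onFrameReceived(bitmap) }
    }

    fun addObserver(observer: VideoObserver) {
        observerList.add(observer)
    }

    fun removeObserver(observer: VideoObserver) {
        observerList.remove(observer)
    }
}
```

This class defines the two core methods, start() and stop(), that our activity will use to control video streams.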

VideoObserver Interface

As you may have noticed, we will use observers to be notified when a frame is available. Here is the implementation of our VideoObserver interface:

```kotlin
interface VideoObserver {
    fun onFrameReceived(bitmap: Bitmap)
}
```

With this foundation in place, we are ready to explore frame extraction using JavaCV and, later, the official Media3 ExoPlayer.
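One caveat before moving on: if frames are delivered from a background thread while observers are added or removed from another thread, iterating a plain mutable list can throw a ConcurrentModificationException. The following is a minimal sketch of one way around that, using a CopyOnWriteArrayList. The `FrameObserver` and `FrameObservers` names are hypothetical, and the type parameter `F` stands in for Bitmap so the example runs off-device.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;

/** Hypothetical stand-in for VideoObserver; F replaces Bitmap. */
interface FrameObserver<F> {
    void onFrameReceived(F frame);
}

/** Hypothetical observer registry that can be modified while frames are dispatched. */
final class FrameObservers<F> {
    // Iteration works on a snapshot, so add/remove during dispatch is safe.
    private final List<FrameObserver<F>> observers = new CopyOnWriteArrayList<>();

    void add(FrameObserver<F> observer) {
        observers.add(observer);
    }

    void remove(FrameObserver<F> observer) {
        observers.remove(observer);
    }

    void dispatch(F frame) {
        for (FrameObserver<F> observer : observers) {
            observer.onFrameReceived(frame);
        }
    }
}

final class FrameObserversDemo {
    public static void main(String[] args) {
        FrameObservers<String> registry = new FrameObservers<>();
        List<String> received = new ArrayList<>();

        // An observer that unregisters itself after its first frame,
        // which would break iteration over a plain ArrayList.
        FrameObserver<String> oneShot = new FrameObserver<String>() {
            @Override
            public void onFrameReceived(String frame) {
                received.add(frame);
                registry.remove(this);
            }
        };

        registry.add(oneShot);
        registry.dispatch("frame-1");
        registry.dispatch("frame-2");
        System.out.println(received);
    }
}
```

The trade-off is that every add or remove copies the list, which is acceptable here because observers change rarely while frames arrive constantly.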

Using FFmpeg (JavaCV) for Reading Videos

Configuration

Before writing code, we need to adjust our Gradle configuration. In the android section of your app’s build.gradle.kts file, add:

```kotlin
packaging {
    resources {
        excludes += "META-INF/**"
    }
}
```

In your app’s AndroidManifest.xml file, add this attribute to the application tag:

```xml
android:extractNativeLibs="true"
```

Finally, add the library to the dependencies section of your module’s build.gradle.kts file:

```kotlin
implementation("org.bytedeco:javacpp:1.5.11")
implementation("org.bytedeco:javacv:1.5.11")
implementation("org.bytedeco:ffmpeg:7.1-1.5.11")
implementation("org.bytedeco:ffmpeg:7.1-1.5.11:android-arm64")
implementation("org.bytedeco:ffmpeg:7.1-1.5.11:android-x86_64")
```

FFmpegVideoStreamReader Class

To extract frames, we use an FFmpegFrameGrabber object, which handles everything for us. After providing the URL of the file or stream we want to read, we can loop through the frames and call the grabImage() function. JavaCV also provides AndroidFrameConverter to turn a Frame into an Android Bitmap.

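```java
import android.graphics.Bitmap;
import android.net.Uri;
import android.util.Log;

import org.bytedeco.javacv.AndroidFrameConverter;
import org.bytedeco.javacv.FFmpegFrameGrabber;
import org.bytedeco.javacv.Frame;
import org.bytedeco.javacv.FrameGrabber;
import org.jetbrains.annotations.NotNull;

import java.util.concurrent.atomic.AtomicBoolean;

public class FFmpegVideoStreamReader extends VideoStreamReader {
    private static final String TAG = "FFmpegVideoStreamReader";

    // Flag of the current run. Each start() gets its own flag so that a
    // previous grab thread cannot be revived by a later start().
    private volatile AtomicBoolean isRunning = new AtomicBoolean(false);

    @Override
    public void start(@NotNull Uri uri) {
        stop();
        final AtomicBoolean running = new AtomicBoolean(true);
        isRunning = running;

        // Local files are opened by path. Network streams (rtsp://, udp://, ...)
        // need the full URL, because getPath() would drop the scheme and host.
        final String scheme = uri.getScheme();
        final String input = (scheme == null || "file".equals(scheme))
                ? uri.getPath()
                : uri.toString();

        // Grab frames on a background thread so the UI thread is never blocked.
        new Thread(() -> {
            try (
                FFmpegFrameGrabber ffmpegFrameGrabber = new FFmpegFrameGrabber(input);
                AndroidFrameConverter androidFrameConverter = new AndroidFrameConverter()
            ) {
                ffmpegFrameGrabber.start();
                while (running.get()) {
                    try {
                        Frame frame = ffmpegFrameGrabber.grabImage();
                        if (frame == null) {
                            // End of the video: rewind to loop playback.
                            ffmpegFrameGrabber.setFrameNumber(0);
                        } else {
                            Bitmap bitmap = androidFrameConverter.convert(frame);
                            notifyObservers(bitmap);
                            frame.close();
                        }
                    } catch (FrameGrabber.Exception e) {
                        Log.e(TAG, e.toString());
                    }
                }
            } catch (FrameGrabber.Exception e) {
                Log.e(TAG, e.toString());
            }
        }).start();
    }

    @Override
    public void stop() {
        // The grab thread exits after finishing its current iteration.
        isRunning.set(false);
    }
}
```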

Using Media3 ExoPlayer for Reading Videos

Configuration

Adding Media3 ExoPlayer to your project is much simpler than adding JavaCV. No extra build configuration is needed; you simply add the dependencies to your module’s build.gradle.kts.

```kotlin
implementation("androidx.media3:media3-exoplayer:1.8.0")
implementation("androidx.media3:media3-exoplayer-dash:1.8.0")
implementation("androidx.media3:media3-ui:1.8.0")
implementation("androidx.media3:media3-ui-compose:1.8.0")
implementation("androidx.media3:media3-effect:1.8.0")
```

To add support for RTSP and RTP/UDP, add this extra dependency:

```kotlin
implementation("androidx.media3:media3-exoplayer-rtsp:1.8.0")
```

It’s worth mentioning that, right now, Media3’s RTSP support covers only a limited set of video formats, H.264 being the most common, and MJPEG is not among them. A pull request adding MJPEG support is still open here.

Media3VideoStreamReader Class

With Media3 ExoPlayer imported, we can now create our Media3VideoStreamReader, which is more complex than its JavaCV counterpart.

```kotlin
import android.content.Context
import android.graphics.PixelFormat
import android.media.ImageReader
import android.net.Uri
import android.os.Handler
import android.os.Looper
import android.util.Log
import androidx.core.graphics.createBitmap
import androidx.media3.common.C
import androidx.media3.common.ColorInfo
import androidx.media3.common.DebugViewProvider
import androidx.media3.common.MediaItem
import androidx.media3.common.Player
import androidx.media3.common.SurfaceInfo
import androidx.media3.common.Tracks
import androidx.media3.common.VideoFrameProcessor
import androidx.media3.common.util.UnstableApi
import androidx.media3.effect.DefaultGlObjectsProvider
import androidx.media3.effect.DefaultVideoFrameProcessor
import androidx.media3.exoplayer.ExoPlayer
import java.util.concurrent.Executors

const val OUTPUT_WIDTH = 1920
const val OUTPUT_HEIGHT = 1080

@UnstableApi
class Media3VideoStreamReader(context: Context) : VideoStreamReader() {
    private val player = ExoPlayer.Builder(context).build()

    // Receives the processed frames as RGBA images (at most 2 buffered).
    private val imageReader =
        ImageReader.newInstance(OUTPUT_WIDTH, OUTPUT_HEIGHT, PixelFormat.RGBA_8888, 2)
    private val executorService = Executors.newSingleThreadExecutor()
    private val videoFrameProcessorFactory =
        DefaultVideoFrameProcessor.Factory.Builder()
            .setExecutorService(executorService)
            .setGlObjectsProvider(DefaultGlObjectsProvider())
            .build()
    private var videoFrameProcessor: DefaultVideoFrameProcessor? = null

    init {
        player.repeatMode = Player.REPEAT_MODE_ONE
        player.addListener(
            object : Player.Listener {
                // Called once the tracks of the media item are known.
                override fun onTracksChanged(tracks: Tracks) {
                    tracks.groups.forEach { group ->
                        if (group.type == C.TRACK_TYPE_VIDEO) {
                            for (i in 0 until group.length) {
                                val format = group.getTrackFormat(i)
                                try {
                                    // Drop the previous pipeline before building a new one.
                                    player.clearVideoSurface()
                                    videoFrameProcessor?.release()
                                    videoFrameProcessor = videoFrameProcessorFactory.create(
                                        context,
                                        DebugViewProvider.NONE,
                                        ColorInfo.SDR_BT709_LIMITED,
                                        true,
                                        executorService,
                                        object : VideoFrameProcessor.Listener {}
                                    ).also { videoFrameProcessor ->
                                        videoFrameProcessor.setOnInputSurfaceReadyListener {
                                            // Processor output goes to the ImageReader.
                                            videoFrameProcessor.setOutputSurfaceInfo(
                                                SurfaceInfo(
                                                    imageReader.surface,
                                                    OUTPUT_WIDTH,
                                                    OUTPUT_HEIGHT
                                                )
                                            )
                                            videoFrameProcessor.registerInputStream(
                                                VideoFrameProcessor.INPUT_TYPE_SURFACE_AUTOMATIC_FRAME_REGISTRATION,
                                                format.buildUpon()
                                                    .setColorInfo(ColorInfo.SDR_BT709_LIMITED)
                                                    .build(),
                                                mutableListOf(),
                                                0
                                            )
                                            // Player output goes to the processor input.
                                            player.setVideoSurface(videoFrameProcessor.inputSurface)
                                            player.play()
                                        }
                                    }
                                } catch (e: Exception) {
                                    Log.e("Media3VideoStreamReader", e.toString())
                                }
                            }
                        }
                    }
                }
            }
        )
    }

    // Copies the most recent image of the reader into a Bitmap.
    private fun acquireLatestImage(reader: ImageReader) =
        reader.acquireLatestImage().let { image ->
            // No image available yet: fall back to a 1x1 placeholder.
            if (image == null) return@let createBitmap(1, 1)
            val planes = image.planes
            val buffer = planes.first().buffer
            val width = image.width
            val height = image.height
            val imageBuffer = buffer.rewind()
            val pixelStride = planes.first().pixelStride
            val rowStride = planes.first().rowStride
            val rowPadding = rowStride - (pixelStride * width)
            // The bitmap is as wide as a padded row so the buffer can be copied
            // in one call; any padding columns end up on its right edge.
            createBitmap((rowPadding / pixelStride) + width, height).let { bitmap ->
                bitmap.copyPixelsFromBuffer(imageBuffer)
                image.close()
                bitmap
            }
        }

    override fun start(uri: Uri) {
        stop()
        // Frames are delivered to observers on the main thread.
        imageReader.setOnImageAvailableListener({ reader ->
            notifyObservers(acquireLatestImage(reader))
        }, Handler(Looper.getMainLooper()))
        player.setMediaItem(MediaItem.fromUri(uri))
        player.prepare()
    }

    override fun stop() {
        player.stop()
    }
}
```

As you can see, multiple components must be configured. The player manages the stream and controls playback (play, pause, loop, speed, etc.). We also listen for player events, the most important one for our use case being onTracksChanged. When it is triggered, we set up a VideoFrameProcessor (available via the Media3 Effect library), hand its input Surface to the player, and direct its output to the ImageReader surface. Using ImageReader, we then convert each output frame to a Bitmap with the acquireLatestImage() function.

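Why the extra step? ExoPlayer’s video decoder typically outputs frames in a format (usually YUV) that cannot be copied directly into a Bitmap. The VideoFrameProcessor sits in between and renders each frame into the RGBA_8888 surface of the ImageReader, whose pixel buffer can then be copied into a Bitmap without errors.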
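One detail of acquireLatestImage() deserves a closer look: an image plane may pad each row, so its row stride can be larger than width × pixel stride. The article’s code handles this by making the Bitmap as wide as the padded row. As an alternative, the padding can be stripped before copying. The following is a minimal off-device sketch using a plain ByteBuffer; the `RowPadding` class and its method names are hypothetical.

```java
import java.nio.ByteBuffer;

/** Hypothetical helpers for dealing with row-padded image planes. */
final class RowPadding {

    /** Width in pixels of one padded row, computed as in acquireLatestImage(). */
    static int paddedWidth(int width, int pixelStride, int rowStride) {
        int rowPadding = rowStride - pixelStride * width;
        return rowPadding / pixelStride + width;
    }

    /** Copies a row-padded plane into a tightly packed byte array. */
    static byte[] stripPadding(ByteBuffer plane, int width, int height,
                               int pixelStride, int rowStride) {
        int rowBytes = width * pixelStride;
        byte[] packed = new byte[rowBytes * height];
        // Work on a duplicate so the caller's buffer position is left untouched.
        ByteBuffer source = plane.duplicate();
        for (int row = 0; row < height; row++) {
            source.position(row * rowStride);
            source.get(packed, row * rowBytes, rowBytes);
        }
        return packed;
    }

    public static void main(String[] args) {
        // A 3x2 RGBA image whose rows are padded to 16 bytes (4 pixels).
        int width = 3, height = 2, pixelStride = 4, rowStride = 16;
        ByteBuffer plane = ByteBuffer.allocate(rowStride * height);
        for (int i = 0; i < plane.capacity(); i++) {
            plane.put(i, (byte) i);
        }
        byte[] packed = stripPadding(plane, width, height, pixelStride, rowStride);
        System.out.println("padded width: " + paddedWidth(width, pixelStride, rowStride));
        System.out.println("packed bytes: " + packed.length);
    }
}
```

On Android, the packed array could then be wrapped with ByteBuffer.wrap() and copied into a Bitmap of exactly width × height pixels, at the cost of one extra copy per frame.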

Conclusion and Comparison

With these two examples, you should now be able to read a wide range of streams and incorporate them into your native Android app. However, it is important to compare the two approaches to decide which best suits your needs.

After using both methods, I would conclude that you have to choose between compatibility and stability. JavaCV can handle virtually any format, but over long periods of use, I noticed that memory was not always released correctly, leading to slowdowns. Also, you cannot easily control playback speed.
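On the playback speed point: the JavaCV loop shown earlier delivers frames as fast as they can be decoded. One possible workaround, given here only as a sketch under assumptions, is to pace delivery using frame timestamps. The `FramePacer` class is hypothetical, and it assumes each frame’s timestamp is available in microseconds, for example from the grabber’s getTimestamp() method.

```java
import java.util.concurrent.TimeUnit;

/** Hypothetical helper that paces frame delivery to a chosen playback speed. */
final class FramePacer {
    private final double speed;
    private long firstFrameUs;
    private long lastFrameUs = -1;
    private long startNanos;

    FramePacer(double speed) {
        if (speed <= 0) {
            throw new IllegalArgumentException("speed must be positive");
        }
        this.speed = speed;
    }

    /** Returns how long to wait, in nanoseconds, before delivering the frame. */
    long delayNanos(long frameTimestampUs, long nowNanos) {
        if (lastFrameUs < 0 || frameTimestampUs < lastFrameUs) {
            // First frame, or the stream looped back: restart the clock.
            firstFrameUs = frameTimestampUs;
            startNanos = nowNanos;
            lastFrameUs = frameTimestampUs;
            return 0;
        }
        lastFrameUs = frameTimestampUs;
        long mediaElapsedNanos = (frameTimestampUs - firstFrameUs) * 1_000L;
        long dueNanos = startNanos + (long) (mediaElapsedNanos / speed);
        return Math.max(0, dueNanos - nowNanos);
    }

    /** Blocks the calling thread until the frame is due. */
    void await(long frameTimestampUs) throws InterruptedException {
        long delay = delayNanos(frameTimestampUs, System.nanoTime());
        if (delay > 0) {
            TimeUnit.NANOSECONDS.sleep(delay);
        }
    }
}
```

In the grab loop, calling await() with the current timestamp just before notifying observers would slow delivery down to the requested speed. It cannot make decoding faster than the device allows, and for live network streams the source already sets the pace.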

On the other hand, Media3 ExoPlayer is more limited in codec support, as it does not handle MJPEG and some other formats. However, if your stream uses H.264, then ExoPlayer is the clear choice for its stability and integration with the Android ecosystem.

That concludes this article. I hope it was useful and informative, and I look forward to sharing more in the next one.